Last updated: April 7, 2026

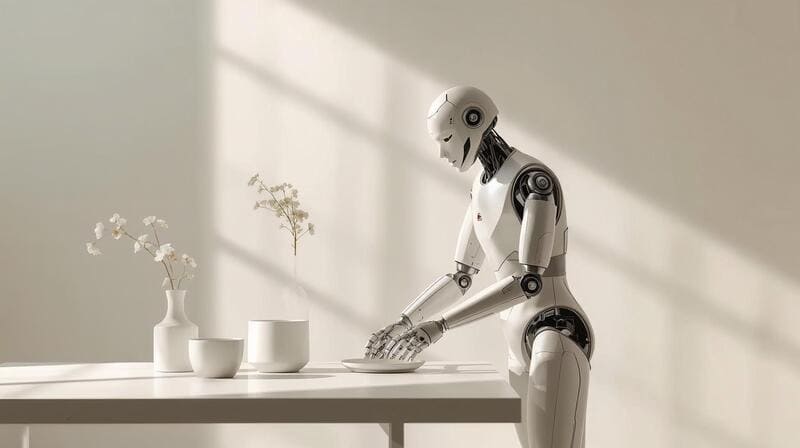

For decades, robots were viewed as machines built for repetitive tasks: assembling car parts, moving packages, or running fixed code. But 2025 marks a turning point. Google DeepMind has unveiled breakthrough models that allow robots to perform delicate, context-driven actions – from folding origami to setting a table based on voice instructions. This isn’t just automation anymore; it’s the dawn of AI-powered dexterity.

From Origami to Table Setting: Precision in Motion

DeepMind’s new Gemini Robotics models raise the bar for how robots interact with the physical world. Instead of executing rigid commands, these robots combine multimodal AI with natural language processing and visual understanding. That means they can fold intricate origami, close Ziploc bags without tearing them, and even set a dining table after being told, “Prepare the table for four.” By blending fine motor skills with contextual understanding, robots are moving closer than ever to human-level adaptability.

According to Engadget, Gemini-powered robots completed real-world tasks with an 85% higher success rate than earlier models.

From Controlled Labs to Real-World Impact

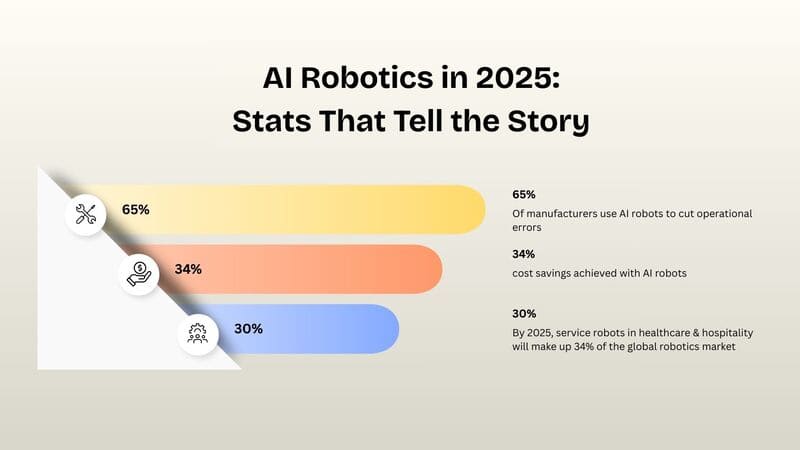

Until now, robots excelled mainly in controlled environments. DeepMind’s innovations are pushing them into homes, hospitals, and offices. The impact is already visible: more than 65% of manufacturers worldwide use AI-driven robots to reduce operational errors and cut costs by nearly 30%. By 2025, service robots in industries like healthcare and hospitality are expected to represent 34% of the global robotics market, valued at over $72 billion. In healthcare, Gemini robots are being tested for tasks such as preparing surgical equipment and assisting elderly patients, where delicate and adaptive movements are essential.

Human-Robot Collaboration: Voice as the Bridge

The most exciting part of this evolution is how humans interact with robots. Instead of programming, you simply talk. You might say, “Place the spoon to the right of the plate,” or “Pack a lunch box with fruit, a sandwich, and a drink.” The robot interprets your intent, processes the environment in real time, and executes – without the need for step-by-step coding. This ease of interaction lowers the barrier to entry, making robotics accessible not only to businesses but also to everyday households.
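As a rough illustration of what "interpreting intent" means, the toy Python sketch below turns one of the example sentences above into a structured command a planner could act on. It is not how Gemini Robotics works: the real models rely on learned multimodal AI, while this sketch uses a single hand-written pattern. The names `PlaceCommand` and `parse_place_command` are hypothetical and exist only for this example.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlaceCommand:
    """Structured form of a spoken placement request (hypothetical type)."""
    item: str      # object to move, e.g. "spoon"
    relation: str  # spatial relation, e.g. "right of"
    anchor: str    # reference object, e.g. "plate"


# Assumption: this pattern covers only one sentence shape,
# "place the X to the left/right of the Y". A real system would not
# depend on fixed phrasing like this.
_PLACE_PATTERN = re.compile(
    r"place the (?P<item>\w+) to the (?P<side>left|right) of the (?P<anchor>\w+)",
    re.IGNORECASE,
)


def parse_place_command(utterance: str) -> Optional[PlaceCommand]:
    """Return a PlaceCommand if the utterance matches the pattern, else None."""
    match = _PLACE_PATTERN.search(utterance)
    if match is None:
        return None
    return PlaceCommand(
        item=match["item"].lower(),
        relation=f"{match['side'].lower()} of",
        anchor=match["anchor"].lower(),
    )


if __name__ == "__main__":
    print(parse_place_command("Place the spoon to the right of the plate"))
    print(parse_place_command("Pack a lunch box with fruit"))
```

The second request returns `None` because it does not fit the fixed pattern. That limitation is the point of the contrast: a rule-based parser needs a new rule for every phrasing, whereas the models described here are meant to handle open-ended instructions without step-by-step coding.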

The Bigger Picture: Robots With Brains and Hands

DeepMind’s Gemini Robotics shows us that the future of robotics lies in merging AI cognition with physical dexterity. These robots don’t just move – they learn new skills quickly without months of retraining, adapt dynamically to changes in objects, layouts, or commands, and collaborate safely with humans by understanding context and intent. In short, they are no longer just programmed workers but autonomous agents with both brains and hands.

Looking Ahead: A World of Adaptive Robots

As AI-powered robots become more common, their roles will expand across industries and everyday life. In hospitality, they’ll be capable of preparing rooms and creating personalized dining experiences. In healthcare, they’ll assist with surgical preparation and support patient mobility. And in our homes, they’ll help with chores, errands, and even creative activities like cooking. The line between human capability and robotic assistance is blurring – and it’s this seamless integration that makes the shift so revolutionary.

Frequently Asked Questions (FAQ) About AI in Robotics

What makes Google DeepMind’s breakthrough so special?

It’s the first time robots have combined complex physical dexterity with real-time understanding of voice and vision.

Where will these robots be used first?

Healthcare, hospitality, and manufacturing are the leading sectors due to the need for precise, adaptable tasks.

Are robots like these safe to use around people?

Yes. Gemini robots are trained to understand human intent and avoid unsafe actions, making them safer collaborators.

How do they learn new skills?

By leveraging multimodal AI – integrating voice, vision, and context – they can learn from examples and adapt in real time.

What’s the biggest challenge ahead?

Scaling production while keeping costs manageable, along with addressing ethical concerns in sensitive industries.

Are service robots really gaining traction?

Yes. Service robots are expected to represent 34% of the global robotics market by 2025, particularly in healthcare and hospitality.

What does the future hold for AI in robotics?

Robots that don’t just follow commands but anticipate human needs, integrating naturally into our workplaces, hospitals, and homes.
