Artificial intelligence is transforming everything – business, creativity, and daily life. But with great power comes ethical responsibility. When companies cut corners on privacy, transparency, or fairness, regulators step in. Let’s look at what’s happening, who got caught, and how to build AI that earns trust instead of fines.

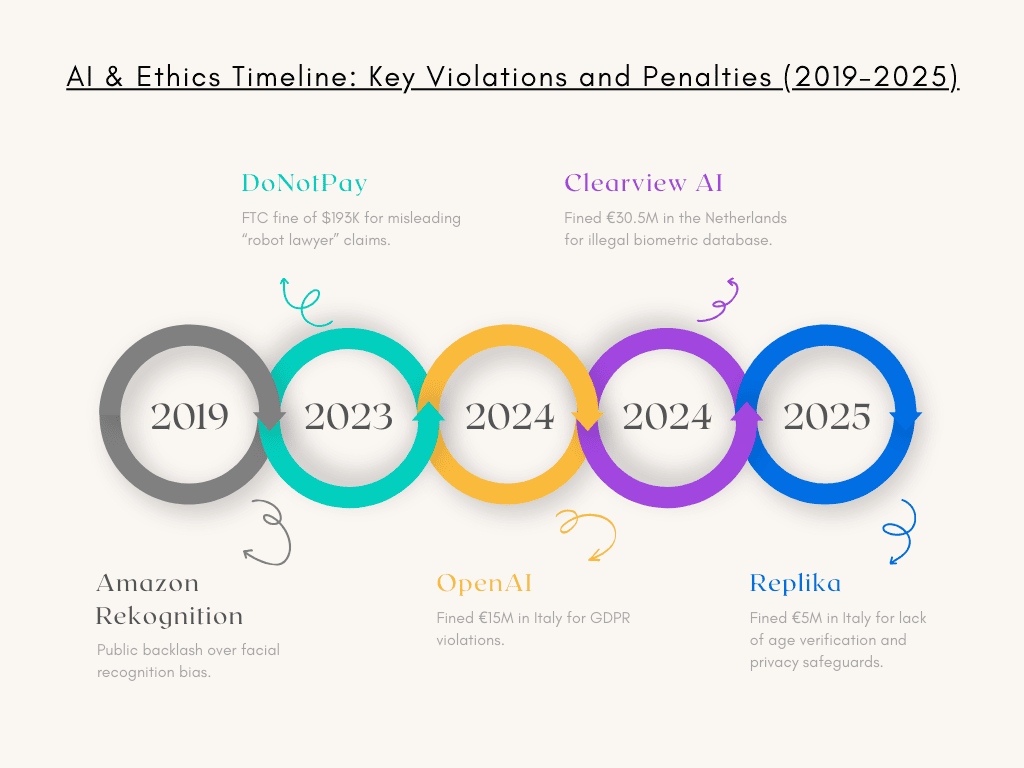

Real Violations: What Happened and the Penalties

- OpenAI (2024) – fined €15M in Italy for collecting personal data without a proper legal basis and a lack of transparency.

- Clearview AI (2024) – fined €30.5M in the Netherlands for building an illegal biometric facial recognition database scraped from the web.

- Replika (2025) – fined €5M in Italy for insufficient age verification and privacy safeguards.

- DoNotPay (2023–24) – fined $193K in the U.S. by the FTC for misleading claims that its service was a “robot lawyer.”

- Amazon Rekognition (2019) – faced major public backlash for severe bias in facial recognition, particularly misidentifying women and people with darker skin, leading some U.S. police departments to stop using the tool.

These cases are more than punishments – they’re warnings. Ethical mistakes cost money, reputation, and public trust.

Regulation: Where Things Stand and What’s Coming

- The EU Artificial Intelligence Act, which entered into force in August 2024, sets strict rules for high-risk AI systems. It requires transparency, human oversight, and risk assessments, with fines of up to €35M or 7% of global annual turnover, whichever is higher, for the most serious violations.

- GDPR remains the foundation for privacy in the EU, requiring a legal basis for data use, clear transparency, and protection of sensitive data such as biometrics and geolocation.

- In the U.S., there is no single federal law comparable to GDPR, but enforcement is rising through agencies such as the FTC, which targets misleading claims, deceptive practices, and privacy violations.
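
To make the AI Act's fine ceiling concrete, here is a minimal sketch of the arithmetic. It assumes the cap for the most serious violations is the higher of €35M and 7% of global annual turnover; the function name and the turnover figures are hypothetical and chosen only for illustration.

```python
FIXED_CAP_EUR = 35_000_000   # flat ceiling for the most serious violations
TURNOVER_PERCENT = 7         # share of global annual turnover, in percent


def max_fine_eur(annual_turnover_eur: int) -> int:
    """Return the upper bound of the fine, assuming 'whichever is higher' applies."""
    turnover_based_cap = annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based_cap)


# Hypothetical companies: a mid-sized firm and a large multinational.
print(max_fine_eur(100_000_000))     # 7% is 7M, so the flat 35M cap applies
print(max_fine_eur(2_000_000_000))   # 7% is 140M, which exceeds the flat cap
```

For smaller companies the flat €35M figure dominates; the percentage only becomes the binding number once turnover passes €500M. Actual penalties are set case by case and can be far below these ceilings.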

Ethical Challenges: Bias, Fairness & Trust

Beyond fines and regulation, ethical questions lie at the core of the AI debate. The case of Amazon Rekognition (2019) showed how damaging algorithmic bias can be. The system had significantly higher error rates when identifying women and people with darker skin, which sparked a broad public debate about fairness in biometric technologies and led several U.S. police departments to suspend its use. Examples like this illustrate how bias in training data can produce unfair outcomes in hiring, lending, or law enforcement.

At the same time, a lack of transparency often turns AI into a “black box,” making decisions difficult to explain or audit. And when human oversight is missing, errors or misuse can scale quickly and cause widespread harm before anyone has the chance to intervene.
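
One common way to check for the kind of disparity described above is to compare error rates across demographic groups. The following is a minimal sketch of such an audit, not the method used in any of the cases mentioned; the function names, group labels, and toy records are all hypothetical.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Compute the share of wrong predictions per group.

    records: iterable of (group, predicted_label, true_label) tuples.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Gap between the worst-served and best-served group."""
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: (group, model output, ground truth).
sample = [
    ("group_a", "match", "match"),
    ("group_a", "match", "match"),
    ("group_a", "no_match", "no_match"),
    ("group_a", "match", "no_match"),
    ("group_b", "match", "no_match"),
    ("group_b", "no_match", "match"),
    ("group_b", "match", "match"),
    ("group_b", "no_match", "no_match"),
]

rates = error_rates_by_group(sample)
print(rates)                 # per-group error rates
print(max_disparity(rates))  # a large gap is a signal to investigate further
```

A real audit would use far larger samples, look at false positives and false negatives separately, and agree in advance on what size of gap triggers human review.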

Building an Ethical Future for AI

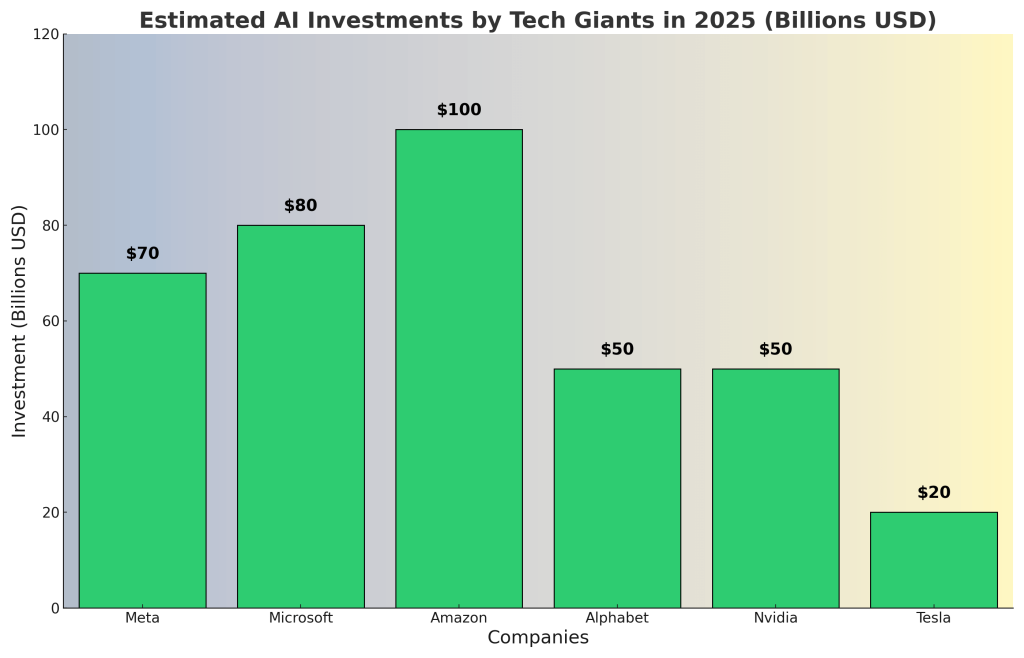

To ensure AI develops in ways that serve humanity, companies must ground their systems in strong ethical foundations. That begins with a clear legal basis for data use and with transparency, so users understand what is collected and why. Strict age verification and safeguards protect younger users, and continuous monitoring with AI-DR (AI Detection & Response) tools helps catch risks early. Fairness requires diverse training data that minimizes bias, and staying aligned with global regulations helps keep systems accountable.

But ethics is not just about avoiding fines – it’s about ensuring AI becomes a force for good. When designed responsibly, AI can empower creativity, improve healthcare, enhance education, and make daily life more seamless, all without undermining trust or human dignity. The true challenge – and opportunity – is to build AI that doesn’t just work for business, but works for people and the world they live in. This broader picture also connects to U.S. policy, particularly presidential support for AI, which is shaping future investments and opportunities.

Frequently Asked Questions (FAQ) About AI & Ethics

1. Why is AI ethics so important today?

Because AI systems influence critical decisions in healthcare, hiring, law enforcement, and everyday life.

2. What’s the biggest risk of unethical AI?

The combination of bias and lack of transparency – mistakes made at scale can cause enormous social harm.

3. What are some real-world examples of AI companies that faced penalties?

- OpenAI (€15M, 2024) – for collecting data without a legal basis.

- Clearview AI (€30.5M, 2024) – for creating an illegal biometric facial database.

- Replika (€5M, 2025) – for failing to implement proper age verification and privacy safeguards.

- DoNotPay ($193K, 2023–24) – for misleading claims about being a “robot lawyer.”

- Amazon Rekognition (2019) – faced public backlash for severe bias in facial recognition.

4. Is there a global regulation for AI?

Not yet. The EU AI Act is the most comprehensive framework so far.

5. What is the EU AI Act?

A regulatory framework that requires transparency and human oversight and bans dangerous practices, with fines of up to €35M or 7% of global annual turnover, whichever is higher.

6. How can companies reduce AI bias?

By using diverse datasets, performing regular bias audits, and involving human oversight.

7. Can children safely use AI tools?

Yes – but only if there are strict safeguards, parental controls, and data minimization.

8. What role does the FTC play in AI ethics?

It enforces rules in the U.S. against misleading AI claims and privacy violations.

9. Why was Amazon Rekognition controversial?

Because in 2019 it misidentified women and people with darker skin at high rates, raising discrimination concerns.

10. What’s the future of AI ethics?

More global regulations, stronger corporate accountability, and rising user demand for trust and transparency.

Related Reading

U.S. Presidential Support for AI in 2025